Alibaba Group's Institute for Intelligent Computing is revolutionizing the world of digital animation by bringing photos to life with its latest innovation, "Animate Anyone."

Led by a team of experts, the institute developed a cutting-edge method to transform still images into high-quality animated videos.

Existing animation technology has long struggled with distortion and inconsistency, two problems Alibaba's innovation aims to solve.

The key breakthrough builds on diffusion models, a class of generative models widely seen as the go-to method for digital image and video generation.

To create realistic animations, "Animate Anyone" contains two essential components:

- ReferenceNet, which captures intricate appearance details from the reference image, maintaining consistency throughout the animation

- Pose Guider, which directs realistic and fluid movements in the video, ensuring a seamless experience

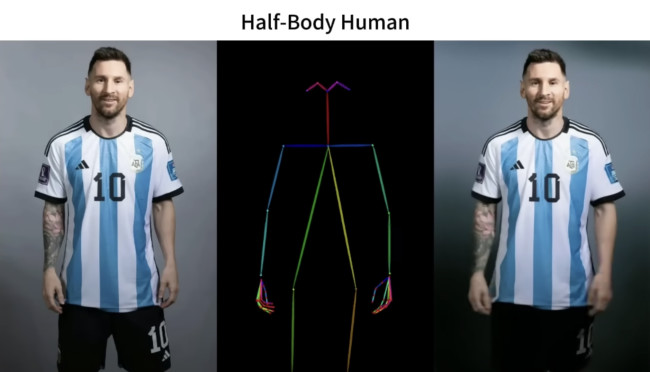

This approach can animate various characters, from human figures to cartoons and humanoid figures, to create visually stunning and temporally consistent results.

How Does 'Animate Anyone' Tech Work?

Alibaba's team tested their method in fashion video synthesis and human dance generation, demonstrating its ability to turn static fashion photographs into realistic, animated videos.

The training process, conducted in two stages, involved an internal dataset of 5,000 character video clips. The first stage focused on individual frames, excluding the temporal layer, while the second introduced the temporal layer for training on 24-frame video clips.
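The two-stage schedule described above can be sketched as a small configuration. This is only an illustration: the module names, the latent dimensions, and the choice of which modules are trainable in each stage are assumptions made for the example and are not stated in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    frames_per_sample: int      # 1 = individual frames, 24 = short clips
    use_temporal_layer: bool    # whether the temporal layer is part of the model
    trainable: frozenset        # assumed: modules that receive gradient updates


# Hypothetical module labels; only the frame counts and the temporal-layer
# on/off switch come from the description in the article.
STAGES = (
    Stage("stage1_frames", 1, False,
          frozenset({"denoising_unet", "reference_net", "pose_guider"})),
    Stage("stage2_clips", 24, True, frozenset({"temporal_layer"})),
)


def batch_shape(stage, batch_size, channels=4, height=64, width=64):
    """Return the tensor shape a data loader might yield for this stage."""
    if stage.frames_per_sample == 1:
        # Stage 1: independent images, no time axis.
        return (batch_size, channels, height, width)
    # Stage 2: clips carry an extra frame axis for the temporal layer.
    return (batch_size, stage.frames_per_sample, channels, height, width)


for stage in STAGES:
    print(stage.name, batch_shape(stage, batch_size=2), sorted(stage.trainable))
```

Splitting training this way lets the per-frame appearance and pose modules settle before the model has to learn motion across frames.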

"To preserve the consistency of intricate appearance features from reference images, we design ReferenceNet to merge detail features via spatial attention. To ensure controllability and continuity, we introduce an efficient pose guider to direct character's movements and employ an effective temporal modeling approach to ensure smooth inter-frame transitions between video frames," the study's abstract reads.
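One plausible reading of "merge detail features via spatial attention" is that every spatial position in the frame being generated attends over both its own positions and those of the reference image. The sketch below illustrates that idea in plain Python. It is not the team's implementation: the function names are hypothetical, feature maps are assumed to be already flattened into lists of token vectors, and the learned query, key and value projections are replaced by the identity for brevity.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def spatial_attention_merge(target_tokens, reference_tokens):
    """Let each target token attend over target + reference tokens.

    Both arguments are lists of equal-length feature vectors. Only the
    target positions are returned, now mixed with reference detail.
    """
    context = target_tokens + reference_tokens   # join along the spatial axis
    dim = len(target_tokens[0])
    scale = 1.0 / math.sqrt(dim)
    merged = []
    for query in target_tokens:
        # Scaled dot-product score between this position and every context token.
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in context]
        weights = softmax(scores)
        # Weighted sum of context tokens (identity value projection assumed).
        merged.append([sum(w * tok[i] for w, tok in zip(weights, context))
                       for i in range(dim)])
    return merged


target = [[1.0, 0.0], [0.0, 1.0]]      # two positions in the generated frame
reference = [[1.0, 1.0]]               # one position from the reference image
print(spatial_attention_merge(target, reference))
```

Because the output keeps only the target positions, the generated frame retains its own layout while borrowing appearance detail from the reference.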

In one of its examples, the team used a static image of renowned football player Lionel Messi and animated it seamlessly to appear as if Messi were performing a TikTok dance.

Despite the technology's superiority in maintaining character details and generating smooth movements, it's not without limitations.

Challenges include difficulty producing highly stable results for hand movements and animating parts of the character that are not visible in the reference image. In the same example, while Messi's hands appear to move fluidly, his tattoo sleeve morphs in shape as he moves, and the result looks less realistic the more detail there is to preserve.

One X user believes the model may take one to two more months of refinements to yield better results before its wide rollout.

"I doubt the output will be this good when they release it to public .. These are probably the best selective examples and probably after many iterations. But then again give it a month or two 🙃," Sambhav Gupta (@sambhavgupta6) posted on December 3, 2023.

While the technology holds immense promise, its release is pending, and the team has yet to publish the code for public use.
